As a foundation that helps early-stage initiatives develop and validate their programs, Overdeck Family Foundation takes a particular interest in funding evaluations that help nonprofit organizations move up in the Every Student Succeeds Act (ESSA) evidence tiers. Not only does this hold us accountable for allocating our funding to impactful programs, but it can also help grantees unlock access to additional funding and adoption opportunities.

A testable theory of impact is a key component of our funding criteria. As grantmakers, we believe it is our responsibility to help ensure programmatic efforts have a measurable and positive impact before scaling. We know early-stage efforts often struggle to secure the necessary capital to conduct research to validate their ideas, which is why a large amount of our early-stage funding is committed to helping organizations strengthen their evidence base prior to engaging in scaling plans.

That’s where ESSA tiers come in.

Why does ESSA matter?

The ESSA tiers of evidence provide districts and schools with a framework for determining which programs, practices, strategies, and interventions work in which contexts and for which students. Under ESSA, which in 2015 replaced No Child Left Behind, districts and schools have the flexibility to choose interventions that improve student outcomes for student populations that resemble those they serve. The ESSA tiers of evidence make clear how rigorous and well-studied an intervention is, and district and school leaders are encouraged to choose interventions that are evidence-based and have been shown to improve student learning and outcomes.

The ultimate goal is to improve student achievement by introducing interventions that have been shown to work. As a foundation, we believe these goals and a focus on selecting evidence-based interventions and programs are more important than ever, especially in a time of resource constraints.

ESSA tiers range from one to four, and are determined by study design, study results, findings from related studies, sample size and setting, and match. Tier 1 interventions have the strongest evidence for a similar population and setting, while Tier 4 interventions have a well-defined logic model that is based on rigorous research but are still innovating on their own practices. We acknowledge that there is some valid criticism of the ESSA tier framework, which ranges from the expense and appropriateness of randomized controlled trials (RCTs) to the fact that many important and valuable impacts cannot be measured in this research context. However, given that government funding for program adoption is often tied to this framework, we believe that the value of ESSA outweighs the drawbacks.

In H1 of 2020, three organizations within our portfolios made significant progress toward strengthening their evidence base and increasing their ESSA tier.

Blended math tutoring

Earlier this year, Saga Education submitted findings from ongoing research on its blended-learning math tutoring model to be evaluated by the Baltimore City District for an ESSA rating. The findings led the district to classify the model as a Tier 2 program. Tier 2 programs are those that show “moderate evidence” through a well-designed and well-implemented quasi-experimental study and a statistically significant positive effect on a relevant outcome. This designation will allow the district and its schools to use federal Title funds to secure Saga services for their students, expanding Saga’s reach and impact.

Saga intends to re-share findings on their program in late 2020 when the Match Year 2 study is published by the University of Chicago Education Labs. They believe their ESSA rating will increase to Tier 1 once the publication has been reviewed and assessed by Baltimore City District.

Saga has historically operated a fully in-person, high-dosage math tutoring model, but over the last two years, they implemented a blended-learning version in around 30 high schools across Chicago, New York City, and Washington, DC (with an opening in Broward County, Florida, planned for this fall). This blended-learning model, still implemented in school, had students split time between in-person tutoring and independent practice via an adaptive online math learning platform. Because it allowed tutors to work with more students, it was more cost-effective than Saga’s traditional program, allowing district dollars to stretch further.

In the traditional model, researchers found average math gains of one- to two-and-a-half extra years of learning. Early findings from the two-year RCT on the blended-learning model are encouraging: analysis suggests that program gains in the blended-learning model are comparable to gains shown in past studies of the traditional model. The blended-learning study is funded by Overdeck Family Foundation and Arnold Ventures, and is being led by the University of Chicago.

During COVID-19 school closures, Saga, which serves over 2,500 students, the vast majority of whom are students of color, quickly pivoted to fully online tutoring so that tutors could connect with students as before, only on computers rather than in person. This fully online model has yet to be studied, but shows promise during a time of school shutdowns and learning loss.

Closing the literacy gap

In the Early Impact Portfolio, an external evaluation of Springboard Collaborative’s summer program, which aims to close the Pre-K through 3rd-grade literacy gap through parent engagement, resulted in evidence that meets a Tier 2 rating, meaning the model is now supported by one or more well-designed and well-implemented quasi-experimental studies. Previously, Springboard had strong programmatic data but did not have an external study that looked at its impact.

Springboard Summer is an intensive, five-week literacy program that combines daily reading instruction for Pre-K through 3rd graders with weekly workshops that train parents to teach reading at home. It also includes professional development for teachers and an incentive structure that awards learning tools—from books to tablets—in proportion to reading progress.

The evaluation, conducted by McClanahan Associates and impactED, showed that, across all grades (K-3), students who participated in Springboard Summer improved their reading assessment scores from the end of the school year before Springboard Summer to the start of the following school year. The largest gains were for students behind grade level.

Additionally, Springboard Summer students showed larger improvements in reading scores than similar students who did not participate, suggesting that Springboard played a significant role in their gains.

The Tier 2 rating will allow districts to use federal funds to pay for Springboard’s programs, ideally bringing the intervention to more children and families.

One immediate strategy Springboard is pursuing for additional scale is a partnership with Teach For America this summer. During the months of June and July, students in grades Pre-K through 4 will work virtually with a Teach For America corps member toward measurable reading goals. Springboard is offering this free program to 8,400 students in an effort to bridge the literacy gap, which research shows has likely widened due to the COVID-19 pandemic.

Incorporating SEL into academics

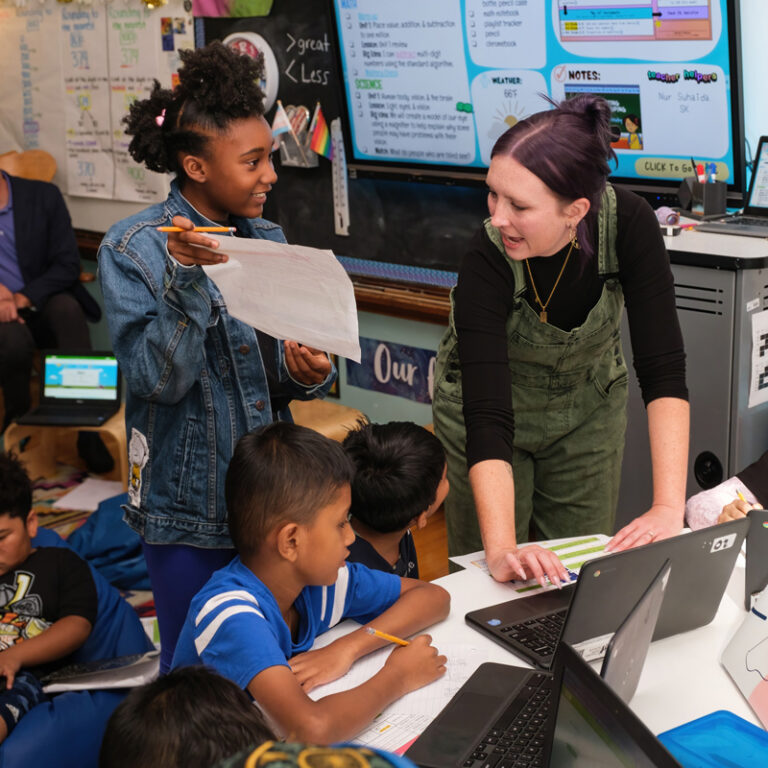

Courtesy of Valor Collegiate Academies

In 2016, Valor Collegiate Academies launched Compass Camp, a program to share part of its approach to comprehensive human development with other schools around the country, including both SEL habit-aligned curriculum and a practice called Circle. With the support of Transcend Education, Valor began building an internal system for collecting and analyzing data to inform the continuous improvement of Compass Camp, but did not have an external research team to create systems for measuring impact.

Given that over 50 schools had already started engaging in Compass Camp, we felt it was important to better understand the impact of this program on student achievement and teacher practice. With the support of Overdeck Family Foundation, Valor put out an RFP in late 2018 for additional capacity to study Compass’s implementation and impact, and to help turbocharge their approach to building a robust evidence base over time. In March 2019, the team selected UVA’s Curry School of Education and Human Development, with Sara Rimm-Kaufman and Shereen El-Mallah as co-principal investigators, to lead the evaluation.

The research team has been working with Valor to refine Compass Camp’s theory of change and to measure the Compass program’s impact on students and teachers, including teacher retention and student academic outcomes. The early work with UVA has resulted in Compass being evaluated at a Tier 4 evidence rating, which is the tier designed to foster innovation and spur research on promising practices. By definition, Tier 4 interventions have a well-defined logic model describing the theory of change, and the content of the theory of change aligns with what existing educational research says about what works. By the end of the two-year partnership with UVA, Valor aims to have more rigorous data collection and stronger demonstrable impact, leading toward evidence that meets a Tier 3 rating. This would be an impressive achievement for a program that is only four years old.

We are proud to support the work of these three grantees and remain committed to helping all our grantee partners develop and scale evidence-based interventions that measurably improve education for students across the United States. We hope other funders consider the benefits of research and evaluation prior to scale, and look forward to partnering with other foundations to continue to make this important work part of philanthropy’s efforts on a larger scale.